A Full Investment/Deal Workflow

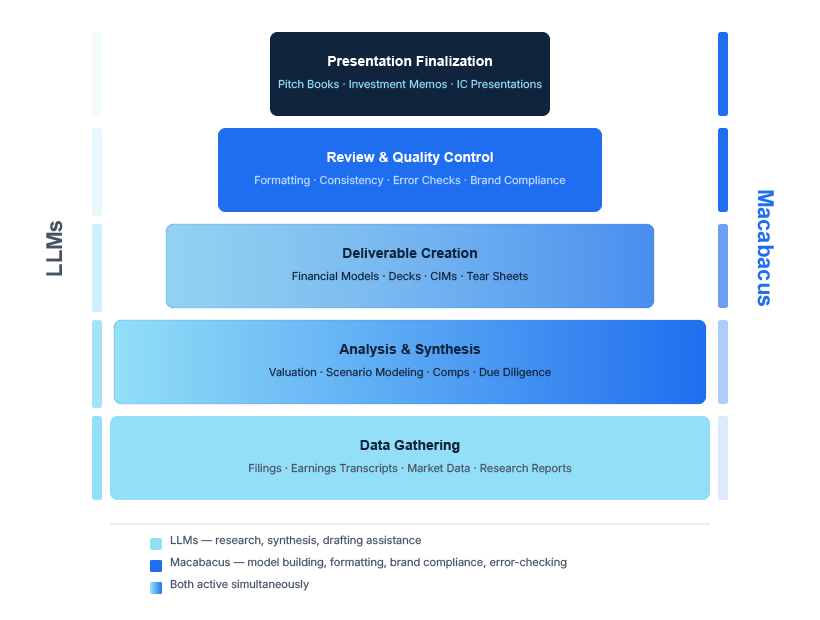

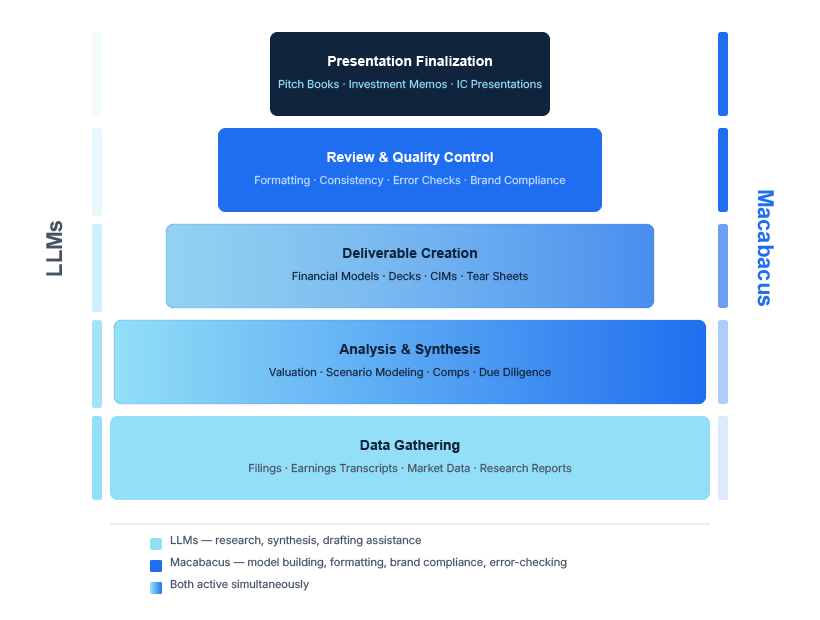

The investment decision or deal workflow, whether buyside, sellside, FP&A, or consulting project, follows a consistent shape. It starts wide and gets progressively more precise. Data is gathered, synthesized, modeled, turned into a deliverable, reviewed, and presented. At each stage, the nature of the task changes, and so does the right mix of tools.

The base is broad: market research, company backgrounds, news, filings, comparable transactions. The middle layers are synthesis, financial modeling, and building the actual deliverable. The top is review, finalization, and presentation to the client, board, or investment committee.

AI currently contributes the most at the start of the workflow. Macabacus is most essential during synthesis, content creation, and the review cycle. At several stages in between, both run at the same time.

Stage 1: Data Gathering

At the base of the workflow, LLMs have the clearest and most immediate impact: pulling background on a company, summarizing recent filings, gathering market data across sources, drafting a first read on a sector. Work that used to take a junior analyst most of a day can now take an hour. That compression frees up time for the work that actually requires judgment.

The caveat is that speed here does not eliminate verification. AI can misread a filing, hallucinate a revenue figure, or miss a material disclosure. The starting point is faster; the check is still required. AI doesn’t remove the need for a careful analyst, but it does remove the hours of manual assembly that precede the analysis, so analysts and associates can spend more time on the part that matters.

Stage 2: Synthesis and Modeling

As work moves from raw data into synthesis and financial modeling, the task shifts from gathering to precision. LLMs can still contribute: structuring a narrative, drafting commentary, helping frame what the numbers mean. But the modeling work itself requires something more reliable. A model that is probably right is not good enough when it is used to support a $300 million acquisition.

This is where Macabacus is essential. When you change a key input, do the numbers tie? When the data updates, do the charts and slides reflect it automatically? These things can break in a live deal process, but deterministic tooling handles them. You can’t send a model to a client and hope the formulas held.
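To make "do the numbers tie?" concrete, here is a minimal sketch of a deterministic tie-out check in Python. It is an illustration only, not how Macabacus works internally: the `model` dictionary, its keys, the figures, and the `tie_out` helper are all hypothetical.

```python
from decimal import Decimal

# Hypothetical model outputs (illustrative figures, $ millions).
model = {
    "segment_revenue": {
        "North America": Decimal("182.4"),
        "Europe": Decimal("96.1"),
        "Asia": Decimal("41.5"),
    },
    "total_revenue": Decimal("320.0"),
    "total_assets": Decimal("540.0"),
    "total_liabilities": Decimal("310.0"),
    "total_equity": Decimal("230.0"),
}


def tie_out(model, tolerance=Decimal("0.05")):
    """Return (check name, difference) for every check that fails to tie."""
    checks = {
        "segments sum to total revenue": (
            sum(model["segment_revenue"].values(), Decimal("0"))
            - model["total_revenue"]
        ),
        "assets equal liabilities plus equity": (
            model["total_assets"]
            - (model["total_liabilities"] + model["total_equity"])
        ),
    }
    return [(name, diff) for name, diff in checks.items() if abs(diff) > tolerance]


# The base case ties, so no failures are reported.
print(tie_out(model))

# Changing one input without updating the rest breaks the balance check.
broken = dict(model, total_equity=Decimal("228.0"))
print(tie_out(broken))
```

The same inputs always produce the same result, which is the property the modeling stage depends on.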

This is also the stage where both tools are most likely to be running in parallel. An analyst can refine the narrative framing of a presentation with an AI chatbot (or with Macabacus’ AI Writing Assistant), while simultaneously managing the underlying model in Macabacus.

Stage 3: Building the Deliverable

By the time a deal team is turning analysis into a client-ready deck, tolerance for error is effectively zero. Numbers need to tie. Slides need to meet firm standards. Charts need to be on-brand. A deck that comes back from a senior banker or compliance with corrections is a delay with a cost, and it happens more than it should. Macabacus eliminates that category of problem rather than reducing it. Templated slide construction, on-brand chart formatting, and consistent layouts are built in, not checked after the fact.

LLMs still contribute at this stage for drafting language and refining talking points. But the structural work belongs to tooling that does not leave room for interpretation. And there is a straightforward cost consideration: formatting a deck correctly does not always require tokens. Running every formatting task through a language model would be expensive and would introduce variance into work that has a single correct answer. Deck Check handles those checks without going to a language model at all.
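The claim that formatting has a single correct answer can be sketched in a few lines of Python. This is a toy rule-based check under stated assumptions, not Deck Check's implementation: the `TextStyle` type, the `BRAND_STANDARD` values, and the deck representation are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TextStyle:
    font: str
    size_pt: int
    color_hex: str


# Hypothetical firm standard: one correct style per element role.
BRAND_STANDARD = {
    "title": TextStyle("Arial", 24, "#002B5C"),
    "body": TextStyle("Arial", 12, "#000000"),
}


def check_deck(deck):
    """Return (slide number, role, actual, expected) for each violation."""
    violations = []
    for slide_no, role, style in deck:
        expected = BRAND_STANDARD.get(role)
        if expected is not None and style != expected:
            violations.append((slide_no, role, style, expected))
    return violations


def enforce(deck):
    """Return a copy of the deck with every known role set to the standard."""
    return [(n, role, BRAND_STANDARD.get(role, style)) for n, role, style in deck]


# Hypothetical deck: slide 2 uses the wrong body font and size.
deck = [
    (1, "title", TextStyle("Arial", 24, "#002B5C")),
    (2, "body", TextStyle("Calibri", 11, "#000000")),
]

for slide_no, role, actual, expected in check_deck(deck):
    print(f"Slide {slide_no} {role}: found {actual}, expected {expected}")
```

A comparison like this uses no tokens and gives the same verdict on every run, which is the cost and variance argument made above.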

Stage 4: Review and Finalization

Human review is necessary but not sufficient. Reviewers miss things, especially on the tenth pass of a document they have been looking at for three days. Formatting checks catch structural issues but not contextual ones. A chart can be on-brand and directly contradict the text on the same slide. The IRR in the executive summary could say 18% while the model output on slide 12 says 16.4%, and nobody catches it until the client does.
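The IRR example can be shown as a toy cross-slide check in Python. This sketch only catches the easy case, where the same metric is labeled identically on both slides. It is not how any Macabacus feature works, and the slide text, pattern, and function name are hypothetical.

```python
import re
from collections import defaultdict

# Hypothetical slide text keyed by slide number.
slides = {
    2: "Executive summary: the transaction delivers an IRR of 18% over five years.",
    12: "Model output: sponsor IRR of 16.4% at the base-case exit multiple.",
}

# "IRR", then any non-digit, non-percent characters, then a percentage.
IRR_PATTERN = re.compile(r"\bIRR\b[^%\d]*(\d+(?:\.\d+)?)\s*%")


def find_irr_mismatches(slides):
    """Map each distinct IRR value to its slides; empty if all values agree."""
    found = defaultdict(list)
    for number, text in sorted(slides.items()):
        for match in IRR_PATTERN.finditer(text):
            found[float(match.group(1))].append(number)
    return dict(found) if len(found) > 1 else {}


for value, slide_numbers in find_irr_mismatches(slides).items():
    print(f"IRR {value}% appears on slide(s) {slide_numbers}")
```

A pattern match like this breaks down as soon as the metric is phrased differently, implied by a chart, or contradicted only in context, which is where rule-based checks stop being enough.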

This is the specific problem Deck Check AIR (AI Review) is built to address. It uses purpose-built AI to catch contextual errors and miscalculations: the issues that formatting tools aren’t built to detect and that a human reviewer is most likely to miss.

These AI features are targeted fixes for a well-defined gap. For example, you do not need AI Review for most formatting tasks, but you do need it to catch logical inconsistencies and cross-slide contextual mismatches. Macabacus applies AI where the task genuinely requires it, and does not apply it where a more reliable approach exists.

By the time the work reaches a client or stakeholder, it carries the credibility of everyone who touched it. AI has not changed who owns the output or what it means when something is wrong. The number is either right or it is not, and the responsibility sits with the team.

What AI has changed is how the work reaches this stage, and in some cases how quickly. Getting to a first draft faster is valuable. But the confidence you carry into the room is a direct function of the thorough review process that comes afterward.

Why Use Non-AI Tools at All?

Many analysts have started their careers with these tools and seen how fast the capabilities are improving. If AI keeps getting better, why build a workflow that uses non-AI tools at all? Why introduce another tool if you are already paying for Claude, ChatGPT, or Copilot?

The answer is that, though they can be embedded in other software, LLMs as a whole are not built to live in Excel and PowerPoint the way Macabacus is. They can draft, summarize, and assist with language and reasoning. They cannot refresh your PowerPoint from a database pull, or enforce your firm’s brand standards on every chart. Those are not gaps that a more capable LLM will fully close, because they are not purely content-creation problems. They are precision problems, and they require a tool built specifically for that environment.

Macabacus continues to add AI capabilities where they genuinely help, like our AI-driven enhancement to Deck Check. But the work still lives in M365. Models need to be carefully constructed, audited, and polished. Slides still need to tie to the numbers. This will hold true well into the future, and the tools that facilitate that work are not optional.

The enthusiasm around AI adoption often overlooks its costs. Routing every task through a large language model, including tasks with a single correct answer, means paying a premium for probability (via LLM tokens) when determinism is expected, available, and cheaper. For example, slide formatting can be verified and enforced without a single AI token. Many model errors can be identified without calling an MCP server. Sometimes the correct tool is the one built to be right, not the one built to be fluent.